Once the birds discover the free buffet, they make the most of it. Chickadees and titmice bounce back and forth between the feeder and nearby trees, transferring one seed at a time. I’ve seen cardinals and grosbeaks lounge at the feeder for hours. With the camera set to take a photo every time its passive infrared (PIR) sensors are triggered, I’d collect hundreds of images each day – often of the same bird in slightly different poses. I’d usually keep only the best one or two. Manually sorting through this avian version of America’s Next Top Model was exhausting.

I wanted a way to automatically group similar images, then choose the best ones from each set. I tried a free tool for removing duplicate photos, but it lumped 80% of the pictures into a single group. Since it couldn’t tell birds apart, I’d still need to manually filter for rare snaps, like those of the Baltimore Oriole. This wasn’t going to work.

This was my first prompt within ChatGPT*:

I have downloaded a bunch of pictures from a trail cam into a folder on my computer. I would like to go through these pictures and sort them using some python code. First - I need the pictures sorted into folders by type of bird - if there are no birds, it should go into the folder "None" and if there are multiple birds it should go in the folder "Multi". Then within each folder, I need the photos grouped by similarity so that when there are several similar images, I can pick the best image and reject the rest. What other information would you need to help me write this code?

Its response completely restructured the problem, splitting it into three stages: bird detection, bird classification, and sorting. As I probed further, it gave me options and sorted them by difficulty, explaining the pros and cons of each.

- Easy – use a pre-trained API.

- Medium – run a pre-trained model locally on my computer.

- Difficult – train my own custom neural network. This would need a good set of training data, plus the time and expertise to train the model.
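To make the three-stage split concrete, here is a minimal sketch of how the pieces could fit together in Python. The `detect_birds` and `classify_bird` arguments are hypothetical placeholders for whichever option ends up doing the real work; only the folder rules ("None", "Multi", or a bird type) come from the prompt above.

```python
from pathlib import Path
import shutil
from typing import Callable, Dict, Sequence


def choose_folder(species_per_bird: Sequence[str]) -> str:
    """Pick a destination folder from the labels of the birds found in one photo."""
    if len(species_per_bird) == 0:
        return "None"    # no birds detected
    if len(species_per_bird) > 1:
        return "Multi"   # more than one bird in the frame
    return species_per_bird[0]


def sort_photos(
    source: Path,
    dest: Path,
    detect_birds: Callable,   # hypothetical: photo path -> list of bird crops
    classify_bird: Callable,  # hypothetical: one bird crop -> species name
) -> Dict[str, int]:
    """Copy each JPEG in `source` into a per-category folder under `dest`."""
    counts: Dict[str, int] = {}
    for photo in sorted(Path(source).iterdir()):
        if photo.suffix.lower() not in {".jpg", ".jpeg"}:
            continue  # skip anything that is not a JPEG
        crops = detect_birds(photo)                         # stage 1: detection
        labels = [classify_bird(crop) for crop in crops]    # stage 2: classification
        folder = choose_folder(labels)                      # stage 3: sorting
        target = Path(dest) / folder
        target.mkdir(parents=True, exist_ok=True)
        shutil.copy2(photo, target / photo.name)            # copy, so originals stay put
        counts[folder] = counts.get(folder, 0) + 1
    return counts
```

Copying rather than moving keeps the original downloads untouched while the detection and classification stages are still being tuned.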

It felt like a different language – what on earth were APIs and how was I supposed to choose one? I wanted to see how far I could get relying on ChatGPT only. I decided against Option 1 since I didn’t want to send my images to an external service over the internet. There were also (minor) costs and limits associated with using APIs. Option 3 was off the table as I didn’t have much of a training set. Option 2 seemed like the sweet spot.

Ever supportive, ChatGPT suggested using YOLOv8 for detection and MobileNet or EfficientNet for classification, and provided the necessary code. It also had me download an imagenet_classes file (ImageNet is a database of annotated images used in computer vision). Taking a closer look at that file, I saw that the list included everything from tiger_shark to forklift. It seemed too broad, and not specific to birds or even wildlife. I had a sinking feeling that I would end up stuck if I went down this path. I shared my concerns with ChatGPT, and it deftly redirected me to Option 3 – producing the required code before I could change my mind. We* went back and forth before ChatGPT understood that I had no training set, and I understood what a good one would look like.
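A quick way to sanity-check a label list like this is to search it for the birds that actually visit the feeder. The sketch below assumes a plain-text file with one class name per line; the filename `imagenet_classes.txt`, the `sample_labels` excerpt, and the `wanted` list are all illustrative assumptions, not the contents of the real file.

```python
from typing import Iterable, List, Tuple


def normalize(name: str) -> str:
    """Lowercase a label and use underscores, so 'Baltimore Oriole' matches 'baltimore_oriole'."""
    return name.strip().lower().replace(" ", "_")


def check_coverage(labels: Iterable[str], wanted: Iterable[str]) -> Tuple[List[str], List[str]]:
    """Split `wanted` into the species present in `labels` and the ones missing from it."""
    available = {normalize(label) for label in labels if label.strip()}
    found, missing = [], []
    for species in wanted:
        (found if normalize(species) in available else missing).append(species)
    return found, missing


# Made-up excerpt for illustration. To check a real file (assumed one label per line):
#   labels = open("imagenet_classes.txt").read().splitlines()
sample_labels = ["tiger_shark", "forklift", "chickadee", "goldfinch"]
wanted = ["Chickadee", "Baltimore Oriole", "Cardinal"]

found, missing = check_coverage(sample_labels, wanted)
print("covered:", found)
print("missing:", missing)
```

If most of the target species land in the missing list, a general-purpose label set is unlikely to be enough on its own.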

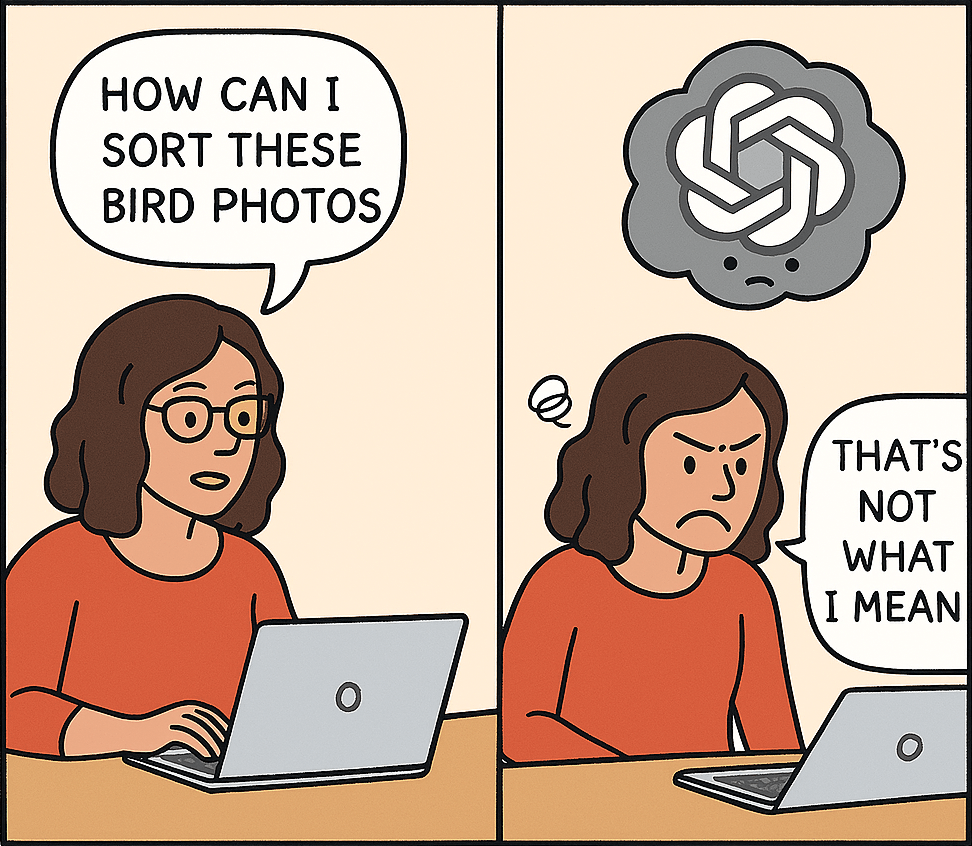

For what it’s worth, at the time of writing ChatGPT doesn’t understand images. Just look at that speech bubble in the second panel. My glasses seem to have disappeared too. And why is a ChatGPT cloud hovering over my head?

I was feeling overwhelmed. I needed to go slower and break this into smaller steps. Perhaps it would be better to focus on building my own training set. How good was this pre-trained model anyway?

*In my writing, I refer to ChatGPT as if it’s a person. Since this is the internet, I feel the need to clarify that I understand ChatGPT is, in fact, not a person.

